Authors:

Vitoantonio Bevilacqua, Angelo Antonio Salatino, Carlo Di Leo, Dario D’Ambruoso, Marco Suma, Donato Barone, Giacomo Tattoli, Domenico Campagna, Fabio Stroppa, Michele Pantaleo

Abstract:

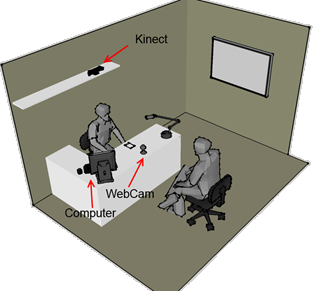

In this paper we present the results of an experimental Italian research project aimed at supporting the classification of the two behavioural states (resonance and dissonance) of a candidate applying for a job position. The proposed framework is based on an innovative system designed and implemented to extract and process the subject's non-verbal expressions (facial, gestural and prosodic) acquired during the whole job interview session. First, we created our own database, containing multimedia data extracted by different software modules from video, audio and 3D sensor streams; we then used SVM classifiers, which achieve accuracies of 72%, 79% and 63% for facial, vocal and gestural features, respectively. ANN classifiers were also used, obtaining comparable results. Finally, we combined all three domains and report the results of this last classification test, which suggest that the proposed experimental approach performs encouragingly.
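The abstract does not state how the three domains are combined. As an illustration only, the following minimal sketch shows one possible decision-level fusion, in which each modality's predicted label is weighted by the per-modality accuracy reported above. The fusion rule, the function name `fuse_decisions` and the tie-breaking policy are assumptions made for this example and are not taken from the described system.

```python
from typing import Dict

# Per-modality SVM accuracies as reported in the abstract.
REPORTED_ACCURACY: Dict[str, float] = {
    "facial": 0.72,
    "vocal": 0.79,
    "gestural": 0.63,
}

# The two behavioural states named in the abstract.
LABELS = ("resonance", "dissonance")


def fuse_decisions(predictions: Dict[str, str],
                   weights: Dict[str, float] = REPORTED_ACCURACY) -> str:
    """Accuracy-weighted vote over per-modality labels.

    Hypothetical fusion rule: the source does not describe the
    combination step, so this is an assumption for illustration.
    """
    scores = {label: 0.0 for label in LABELS}
    for modality, label in predictions.items():
        if label not in scores:
            raise ValueError(f"unknown label: {label!r}")
        scores[label] += weights[modality]
    # Ties resolve to the first entry of LABELS; a real system would
    # need an explicit, justified tie-breaking policy.
    return max(LABELS, key=lambda label: scores[label])


if __name__ == "__main__":
    # Hypothetical per-modality outputs for one interview segment.
    example = {"facial": "resonance", "vocal": "dissonance", "gestural": "resonance"}
    print(fuse_decisions(example))
```

In this sketch, two weaker modalities that agree can outvote the single most accurate one. Whether that behaviour is desirable depends on how correlated the modalities' errors are, which the abstract does not report.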